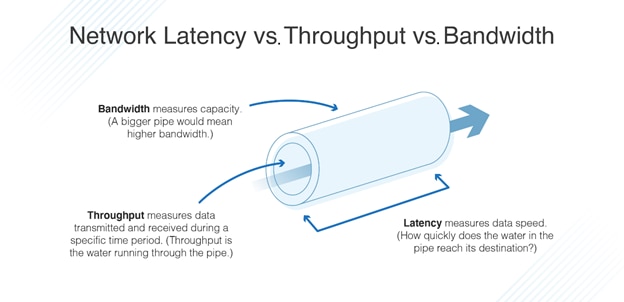

In the world of computers and embedded systems, two common terms we often hear about are latency and throughput. The two are sometimes thought to be interchangeable, and while they do have an important relationship with each other, they differ in meaning and in how they influence the time it takes to process data. Both terms come up when discussing the speed of a system: they revolve around sending data from one location to the next and indicate the time it takes to process and transmit that data. In this blog, we will go over the concepts of latency and throughput, as well as discuss their relationship when it comes to transmitting and processing data in an embedded network or computer system.

Latency is the amount of time it takes to complete an operation, and it indicates how long it takes for data packets to travel from one point in a system to another. It is measured in units of time, such as milliseconds, microseconds, and nanoseconds. When it comes to latency, lower is better, meaning as close to 0 as possible. Lower latency means less delay when sending data within a system, which makes the system run faster and results in a positive user experience. Higher latency can increase webpage load times or disrupt video or audio streams, and even a slight increase in latency can noticeably degrade system performance.
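To make the definition of latency concrete, here is a minimal Python sketch that times a single operation many times and reports the result in nanoseconds, microseconds, and milliseconds. The names `measure_latency_ns`, `summarize`, and `example_operation` are hypothetical, and the buffer copy is only a stand-in for "sending data"; real network or bus latency would be measured around the actual transfer.

```python
import statistics
import time


def measure_latency_ns(operation, repeats=1000):
    """Call `operation` repeatedly and return each call's latency in nanoseconds."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter_ns()
        operation()
        samples.append(time.perf_counter_ns() - start)
    return samples


def summarize(samples_ns):
    """Express the median latency in the three units mentioned above."""
    median_ns = statistics.median(samples_ns)
    return {
        "median_ns": median_ns,
        "median_us": median_ns / 1_000,
        "median_ms": median_ns / 1_000_000,
        "max_ns": max(samples_ns),
    }


def example_operation():
    # Hypothetical stand-in for a data transfer: copy a 64 KiB buffer.
    bytes(bytearray(64 * 1024))


if __name__ == "__main__":
    stats = summarize(measure_latency_ns(example_operation))
    print(f"median latency: {stats['median_us']:.1f} us")
    print(f"worst case:     {stats['max_ns']} ns")
```

Reporting the median alongside the worst case is a deliberate choice here: a single slow sample can matter for audio or video streams even when the typical latency looks low.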
